You of course make a fine point, Andrej. I was being a smart ass.

It's a concern no matter what the vehicle if the driver/rider is not paying attention to the road.

We have the utmost respect for skilled and safe drivers of large vehicles. We toured with a twin-axle caravan for 2.5 years a while back, towing it with a large van. It certainly made us appreciate considerate drivers, of whom there seem to be fewer and fewer.

I'm not sure what it's like in your part of the world, but my wife and I are noticing more and more trucks crossing the white line, swerving, having near misses, etc. Looking at them as we pass (at least one lane width wide of them!), it's quite shocking what you can see, e.g. a driver with elbows on the wheel, using both thumbs to 'control' their phone!

I imagine this is the case in most countries. An argument for vehicle automation, perhaps?

I saw a report just this morning that had me thinking. A legal specialist was describing how the law currently defines 'whoever is in control' of the vehicle as responsible for an incident involving that vehicle (I'm simplifying, of course). The question being raised is who is liable if a vehicle is automated: the driver, the car manufacturer, the software writer...? If you were involved in a collision with one, which would you bring legal action against?

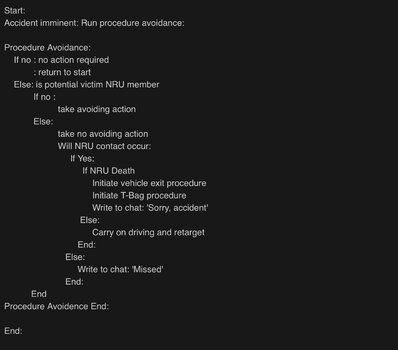

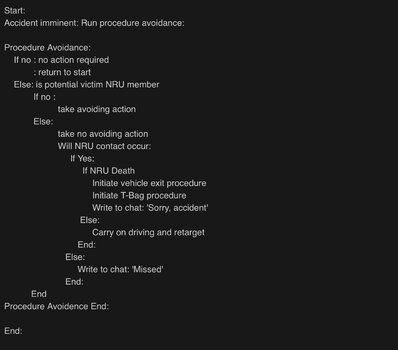

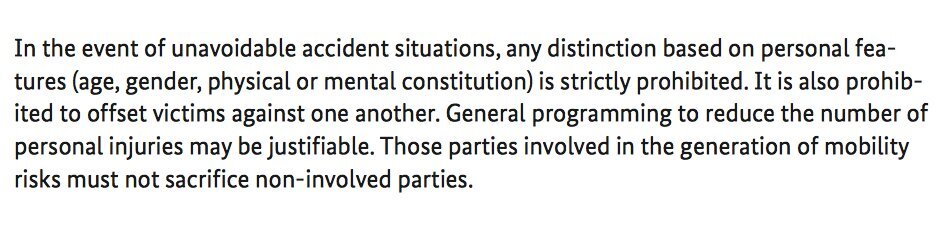

They were testing automated vehicles in Switzerland for an insurance company, on a military base, essentially trying to figure out how automated vehicles would affect insurance. What it did illustrate, though, is the question of how you write software that effectively makes potentially life-and-death decisions. The example was a quad bike illegally overtaking a car, with an automated car travelling in the opposite direction towards the car/quad bike. In this case a collision was inevitable, as there was not enough braking distance to stop any of the vehicles involved. Does the software tell the automated car to apply the brakes, knowing it will hit the quad bike and risk the death of the quad rider? Or does it brake, swerve and hit the car, potentially reducing the risk of death, as a car/car impact is statistically less likely to result in death?

If it were the second option, this raises several questions, I guess the most basic being: who is worth the risk? Also, it asks the software to make a decision about risk based only on the information it is aware of. What it may or may not be aware of is whether the car/car impact would affect vehicles travelling in the same direction as the car/quad bike, just behind them. If the car/car impact occurs, would this risk further cars/bikes/lorries/whatever colliding with the resulting incident?

Personally, it's making my brain hurt. However, it does make me wonder what's better: software with maybe some ethics that we don't all agree with, or people, fully in control, potentially drunk, on drugs, playing with their phone, angry, talking, tired, ill...

Anyway, I'm off to shoot some virtual soldiers... a healthy pastime for any adult male.